Here at GeekExtreme, we love digging into the technical specs of new tech, and I recently discovered something fascinating while reviewing server traffic logs. A company that holds the #1 organic spot for a core industry term was invisible when I fed that exact query into ChatGPT. Google’s traditional search results gave them the crown, but ChatGPT skipped them entirely. We have spent years tracking backlink profiles and fine-tuning on-page SEO metrics, but that legacy architecture is breaking. Right now, optimizing purely for a URL’s organic rank to boost click-through rate, without establishing clear entity trust across the broader web, is a strategic dead end.

Instead of asking who has the oldest domain or the most backlinks, AI models look for a pattern of independent agreement. That creates a different dynamic, in which brand mentions woven into synthesized responses heavily outweigh isolated legacy pages. You need to audit your brand’s presence in conversational answers to determine whether high traditional keyword rankings are translating to LLM inclusion. Understanding why top-ranking content gets skipped begins with realizing how AI evaluates trust: not through web links, but through independent consensus.

Key Takeaways

AI systems pull nearly 48% of their citations from high-trust user-generated platforms like Reddit and G2, bypassing traditional backlink economies.

Achieving visibility across conversational engines requires adopting the FSA Framework to restructure flowing prose into standalone, tightly structured facts.

Forcing an AI model to recognize your specific entity positioning requires identical messaging across multiple third-party domains over a 60-90 day window.

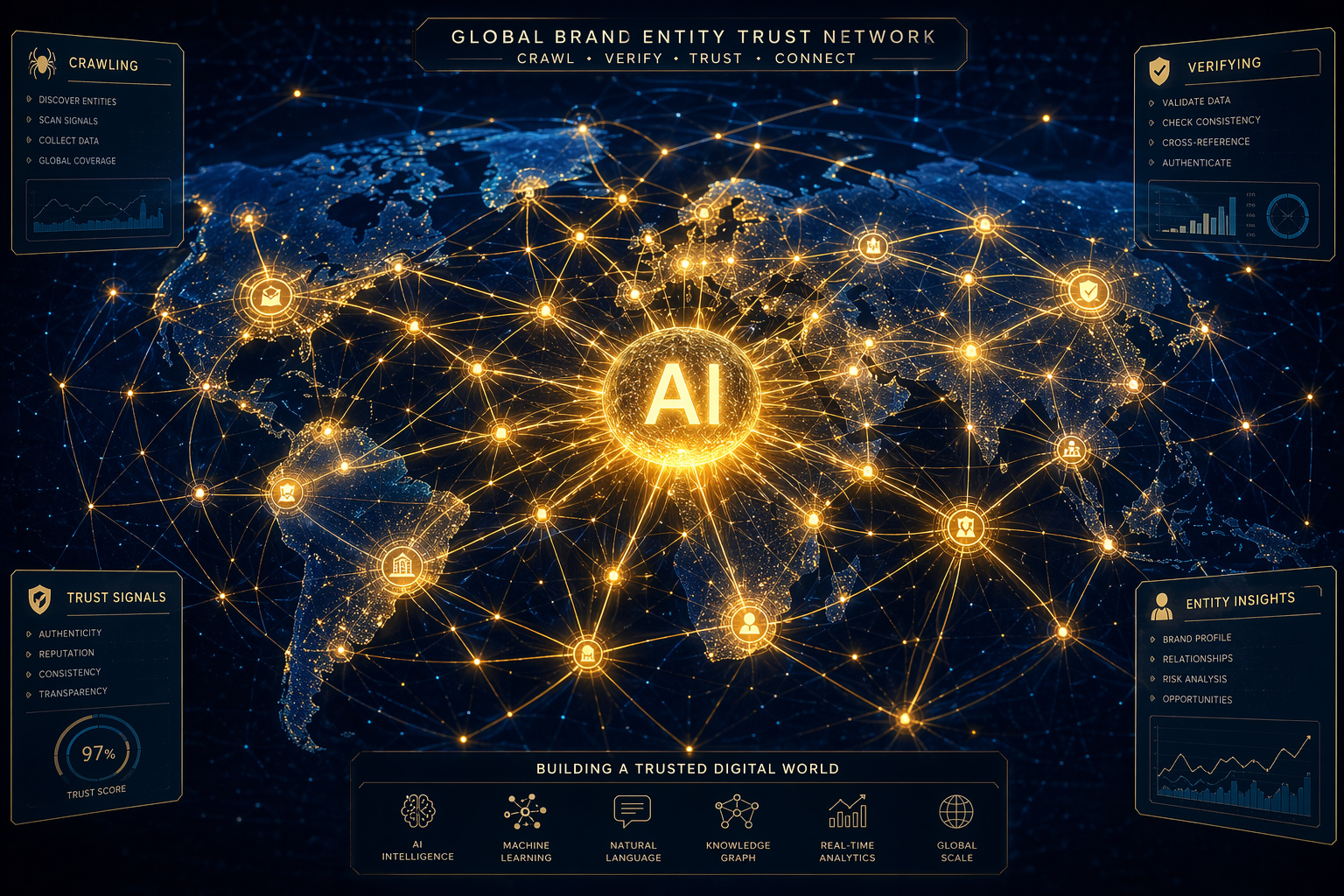

Entity Consensus and User-generated Trust Signals

AI models build trust by checking whether multiple independent websites describe your brand using the exact same terms. They weigh convergence from outside voices far more heavily than bold claims hosted on your own domain. If your own site copy claims you have the best database infrastructure, a model applying AI Sentiment Analysis correctly flags that as a biased signal. But if five unrelated forums connect your brand to that exact database solution, that establishes a pattern the LLM can cite with confidence. This shifts the focus toward entity clarity, which only crystallizes when independent sources reflect identical problem-solving descriptions back to the crawler.

It is an elegant inversion of the old web. Nearly 48% of AI citations originate from high-trust user-generated content because these platforms function as independent verification layers. That makes community management a direct search strategy: a post in a Reddit thread now feeds the consensus mechanism more effectively than an isolated blog post on your own site. Agencies like Taktical Digital, and folks working on platforms like SuperGEO, already treat visibility as a distribution problem, an approach also reflected in how SEO agencies use Semrush. They found that moderate content echoed across external domains beats deeply authoritative content trapped on a single proprietary site.

To adapt, establish active, consistent messaging across 3-5 niche third-party domains to create the multi-site consensus pattern that LLMs need before they will confidently cite a brand. Mastering AI search is now a prerequisite for both enterprise content and successful technical blogging. Yet a consensus strategy that dominates one interface might fail on another, because not all AI engines crawl the web the same way.
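To make the multi-site consensus idea concrete, here is a minimal Python sketch that counts how many independent hosts repeat one positioning phrase verbatim. Everything in it is an assumption for illustration: the function names, the sample URLs and brand, and the threshold of 3 (the low end of the 3-5 range above). It only audits mentions you have already collected; it says nothing about how any AI engine actually scores them.

```python
from urllib.parse import urlparse

# Hypothetical threshold: the low end of the "3-5 domains" range in the article.
MIN_INDEPENDENT_DOMAINS = 3


def consensus_domains(mentions, phrase, own_domain):
    """Return the third-party hosts whose text contains the phrase verbatim."""
    needle = " ".join(phrase.lower().split())
    hosts = set()
    for url, text in mentions:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        # Claims on your own domain (or its subdomains) are not independent.
        if host == own_domain or host.endswith("." + own_domain):
            continue
        if needle in " ".join(text.lower().split()):
            hosts.add(host)
    return hosts


def has_consensus(mentions, phrase, own_domain, minimum=MIN_INDEPENDENT_DOMAINS):
    """True when enough independent hosts repeat the phrase."""
    return len(consensus_domains(mentions, phrase, own_domain)) >= minimum


# Made-up sample data: (url, text) pairs gathered by hand or from an export.
PHRASE = "managed Postgres hosting for small teams"
MENTIONS = [
    ("https://www.forum.example.org/t/1",
     "Acme does managed Postgres hosting for small teams, works fine."),
    ("https://reviews.example.net/acme",
     "Acme: managed Postgres hosting for small teams."),
    ("https://blog.acme.example/about",
     "We offer managed Postgres hosting for small teams."),
    ("https://news.example.com/roundup",
     "Acme is a database company."),
]

print(sorted(consensus_domains(MENTIONS, PHRASE, "acme.example")))
print(has_consensus(MENTIONS, PHRASE, "acme.example"))
```

In this sample only two independent hosts repeat the phrase, so the check fails against a minimum of 3. The brand's own blog is excluded on purpose, which mirrors the point that self-hosted claims do not count toward consensus.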

LLM Retrieval Bias Across Indexing Engines

Optimizing generically for “AI search” is a mistake because a different web-crawling architecture drives each platform. An LLM’s retrieval bias is tightly coupled with its platform maturity, so the rules for inclusion fracture depending on which interface a user happens to launch. You have to understand the mechanics of how these engines index the web. Before uniformly overhauling site architecture, identify which retrieval ecosystem (academic, generative, or traditional) governs the majority of high-intent queries in your niche.

The Conversational Synthesis Model

Some models prioritize a highly conversational, user-friendly synthesis. Microsoft’s Bing index heavily drives this architecture, which is highly visible to users querying natively from the Edge browser. When users ask questions here or via ChatGPT Search, the system prefers sources that present broad, easily digestible explanations that map cleanly to standard conversational follow-ups.

Strict Freshness and Academic Citation

Other engines operate with a different logic when blending AI and web search. Perplexity AI runs on a strict freshness bias and provides numbered academic citations in its AI-generated output. It actively hunts for dense data, fresh primary research, and empirical backing over friendly conversational tone.

Traditional Authority and SERP Integration

Legacy search powers the rest of the landscape. Engines like Google AI Overviews and Google Gemini continue to rely deeply on existing organic SERP authority. They index the web using traditional E-E-A-T signals, meaning a strong historical backlink profile still dictates which synthesized overview appears above the blue links.

FSA Framework for Content Extraction and Structuring

AI models ignore flowing narrative prose in favor of highly structured, standalone factual statements they can easily parse. LLMs do not read text for nuance or subtext; they run extraction commands looking for clear, isolated concepts. The core blueprint here is the FSA Framework (Freshness, Structure, and Authority), which dictates exactly how easily AI models can package and cite an insight.

Convert broad, long-form explanations on core landing pages into rigidly formatted, schema-marked definition blocks that can survive isolated extraction. By organizing data into distinct definition blocks, you provide the clean, extractable content that an AI bot needs to synthesize a coherent answer. Tying the FSA Framework to valid schema markup ensures the parser maps the facts directly to your organization. However, easily extracted definitions only build trust if that same rigid language is recognized elsewhere across the web.
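As one possible shape for a schema-marked definition block, the Python sketch below builds a schema.org DefinedTerm object and wraps it in a JSON-LD script element. The helper names, the glossary URL, and the sample definition text are placeholders invented for illustration. Whether a given AI engine reads this markup is not something the article confirms, so treat it as a formatting aid rather than a guarantee of extraction.

```python
import json


def definition_block(term, definition, term_set_url):
    """Build a schema.org DefinedTerm object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "DefinedTerm",
        "name": term,
        "description": definition,
        "inDefinedTermSet": term_set_url,
    }


def to_script_tag(block):
    """Serialize the block as a JSON-LD script element for the page."""
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(block, indent=2)
        + "\n</script>"
    )


# Illustrative values only; swap in your own term, wording, and glossary URL.
block = definition_block(
    term="Citation Rate",
    definition="The share of tracked queries whose AI-generated answer cites the brand.",
    term_set_url="https://example.com/glossary",
)
print(to_script_tag(block))
```

Keeping the definition to a single self-contained sentence is the point: the same sentence can then be reused word for word on third-party profiles.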

Cross-platform Alignment for Brand Entity Recognition

Recognizing your brand requires AI models to see identical terminology and value propositions across professional networks, review profiles, and guest posts. You have to actively decouple your brand’s core identity from its own website. If an LLM reads a product feature list or pricing details on your root domain, it checks third-party systems like Reddit, G2, and Bing for verification. Synchronize the exact phrasing of your entity’s core problem-solving capability across review sites, professional profiles, and external mentions to force exact entity recognition.

I have seen teams using tools like SearchTides test this approach, observing distinct visibility shifts within a 60-90 day window. You log into LinkedIn, update your company bio to a value proposition statement, mirror that exact text on G2, and then distribute the identical phrasing in guest technical articles to influence the crawler. The goal is controlling the citation context so the model views your software as an independent fact rather than an unverified claim, which clears the path for primary source placement.
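A quick way to check whether that phrasing really is identical everywhere is to compare each profile against one canonical statement. The sketch below uses Python's standard difflib for a rough similarity score and a verbatim containment check to flag drift. The profile names, the sample text, and the helper functions are all hypothetical, and you would paste in the profile text yourself, since no platform API is called here.

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def similarity(canonical, text):
    """Rough 0.0-1.0 similarity between the canonical statement and a profile."""
    return SequenceMatcher(None, normalize(canonical), normalize(text)).ratio()


def out_of_sync(canonical, profiles):
    """Return profile names that do not contain the canonical statement verbatim."""
    target = normalize(canonical)
    return sorted(
        name for name, text in profiles.items() if target not in normalize(text)
    )


# Made-up sample data: text copied by hand from each external profile.
CANONICAL = "Acme provides managed Postgres hosting for small teams."
PROFILES = {
    "linkedin": "Acme provides managed Postgres hosting for small teams.",
    "g2": "Acme provides managed  Postgres hosting for small teams. Trusted by developers.",
    "guest_post": "Acme is a cloud database vendor for startups.",
}

for name in out_of_sync(CANONICAL, PROFILES):
    print(name, round(similarity(CANONICAL, PROFILES[name]), 2))
```

Here only the guest post is flagged. The G2 entry passes because the canonical sentence appears intact once whitespace is normalized, even though extra copy follows it.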

Citation Rate Tracking and ROI Measurement

Traditional rank tracking is obsolete for AI measurement; success now depends largely on your Citation Rate and Competitor Share of Voice. Getting the #1 blue link no longer guarantees users actually see your link when a chatbot synthesizes its answer.

A healthy citation rate, often defined as scoring over 60% visibility on core topics, indicates strong systematic trust, while your share of voice tells you how much of the broader synthetic conversation you own versus a rival. You also have to consider Sentiment Analysis metrics within this pipeline: an AI mention harms you if the model consistently synthesizes the brand in a limiting or negative context.
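The arithmetic behind both metrics is simple enough to sketch in Python. The definitions used here are assumptions, since the article does not give formulas: citation rate is taken as the fraction of tracked queries whose answer cites the brand, and share of voice as the brand's mentions divided by all brand mentions across those answers. The sample results and brand names are made up.

```python
HEALTHY_CITATION_RATE = 0.60  # the "over 60%" figure quoted above


def citation_rate(results, brand):
    """Fraction of tracked queries whose answer cites the brand."""
    if not results:
        return 0.0
    cited = sum(1 for brands in results.values() if brand in brands)
    return cited / len(results)


def share_of_voice(results, brand):
    """The brand's mentions divided by all brand mentions across answers."""
    total = sum(len(brands) for brands in results.values())
    if total == 0:
        return 0.0
    mine = sum(1 for brands in results.values() if brand in brands)
    return mine / total


# Made-up sample: query -> set of brands cited in the AI-generated answer.
RESULTS = {
    "best managed postgres": {"Acme", "Rival"},
    "postgres hosting for startups": {"Acme"},
    "cheap database hosting": {"Rival"},
    "postgres backup tools": {"Acme", "Rival", "Other"},
    "managed database pricing": {"Acme"},
}

rate = citation_rate(RESULTS, "Acme")
print(rate)                             # 0.8
print(rate > HEALTHY_CITATION_RATE)     # True
print(share_of_voice(RESULTS, "Acme"))  # 0.5
```

Note that the two numbers can diverge: Acme appears in 4 of 5 answers here, yet holds only half of all mentions because rivals are cited alongside it.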

Establish a baseline citation-rate metric for your top 20 revenue-driving queries using free dashboards before purchasing enterprise API-tracking solutions. You can pull an initial read natively from Bing Webmaster Tools and its integrated AI Performance dashboard to track your click-through rates on AI-generated answers. As you build a real workflow, automated alert systems like Frase and MentionDesk become necessary to continuously test queries, allowing you to catch visibility shifts without manually prompting browser interfaces all day long.
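Before buying tooling, a visibility shift can be spotted with nothing more than two snapshots of the same query list. The Python sketch below compares a baseline against a later check and reports which queries lost or gained a citation. The snapshot format (query mapped to a true/false "was cited" flag) and the sample queries are assumptions, and the code does not reproduce how Frase, MentionDesk, or Bing Webmaster Tools work.

```python
def visibility_shifts(baseline, current):
    """Compare two {query: was_cited} snapshots and report what changed."""
    lost = sorted(
        q for q, cited in baseline.items() if cited and not current.get(q, False)
    )
    gained = sorted(
        q for q, cited in current.items() if cited and not baseline.get(q, False)
    )
    return {"lost": lost, "gained": gained}


# Made-up snapshots recorded by hand a few weeks apart.
BASELINE = {
    "best managed postgres": True,
    "postgres hosting for startups": True,
    "cheap database hosting": False,
}
CURRENT = {
    "best managed postgres": True,
    "postgres hosting for startups": False,
    "cheap database hosting": True,
}

shifts = visibility_shifts(BASELINE, CURRENT)
print("lost:", shifts["lost"])
print("gained:", shifts["gained"])
```

A query missing from the later snapshot is treated as not cited, which errs on the side of raising an alert.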

Why does my website rank #1 on Google but get ignored by ChatGPT?

Google and ChatGPT use fundamentally different trust systems. While Google prioritizes legacy metrics like backlinks and domain age, AI models look for ‘independent agreement’ across the web. If your brand lacks consistent mentions on external, third-party platforms, the AI will ignore your site because it perceives your own content as biased.

How much does community management impact my AI search visibility?

It is now a critical search strategy, as nearly 48% of AI citations originate from high-trust user-generated platforms like Reddit and G2. Because AI models treat these sites as independent verification layers, a post in a community thread can build more consensus-based trust than an isolated blog post on your own domain.

What is the FSA Framework and how do I use it?

The FSA Framework—Freshness, Structure, and Authority—is a method for restructuring your content so AI models can easily parse it. You convert flowing narrative prose into rigid, schema-marked definition blocks that act as standalone, extractable facts for the AI to synthesize into answers.

How long does it take for AI models to recognize my brand entity?

Forcing an AI model to recognize and verify your specific positioning typically takes between 60 and 90 days. During this window, you must ensure that identical messaging regarding your problem-solving capabilities appears consistently across multiple third-party domains.

What is the difference between how Perplexity AI and ChatGPT index the web?

Their retrieval biases are governed by different architectures. Perplexity AI operates with a strict freshness and academic citation bias, prioritizing primary research and empirical data, whereas models like ChatGPT and Bing favor conversational, easily digestible synthesis that maps to user-friendly follow-ups.

Is traditional SEO still worth the effort in an AI-driven landscape?

Traditional SEO remains relevant for platforms that still rely on E-E-A-T signals, like Google’s AI Overviews. However, if your strategy ignores ‘citation rates’ and ‘cross-platform consensus,’ you will likely remain invisible in emerging generative search interfaces regardless of your legacy rank.

Can I trust my traditional rank tracking tools to measure AI performance?

No, traditional rank tracking is effectively obsolete for AI search. You need to shift your focus to ‘Citation Rate’ and ‘Share of Voice’ to see how often your brand is included in synthetic answers, using tools like Bing Webmaster Tools or automated alert systems to catch visibility shifts.