There are a million AI video tools these days, but the primary friction for content creators isn’t finding a new filter. It is the exhaustion of bouncing between complex video editing software just to execute basic enhancements. This Vmake review examines whether a single browser tab genuinely eliminates that context-switching nightmare. Designed for high-volume social content pipelines, Vmake integrates functionality from dozens of specialized applications into one package—acting as a comprehensive video enhancer to streamline everything from audio repair to video formatting.

Most industry discussions frame artificial intelligence strictly around its generative capabilities, obsessing over how aggressively a tool can alter reality. But for practical production workflows, the real adversary is operational drag. Moving a single clip through a standalone audio scrubber, passing it to an upscale application, and finally importing it into an editor just to add captions is a massive waste of cycles. The average software review ignores this invisible tax on your time. Escaping that bloated, disconnected pipeline is the core utility being offered here.

You might be wondering if a browser-based computational tool can actually replace dedicated desktop hardware. The truth lies entirely in how you define your editing requirements.

Vmake review: consolidating the creator app stack

Replacing a multi-tool editing stack requires ruthlessly prioritizing speed over infinite control. Traditional non-linear editors grant users granular authority over every pixel and waveform, but that power demands high-end local GPUs and constant software updates.

Vmake operates on a completely different premise. Web-based video processing pushes the heavy computational load off your local machine and onto remote servers, effectively bypassing your local hardware bottlenecks. The dashboard replaces the traditional timeline with a series of automated, single-purpose utilities. You are trading the deep, manual slider adjustments found in tools like Premiere Pro for immediate, algorithmic execution.

This workflow consolidation is where the software demonstrates its actual worth to non-technical operators. Instead of hunting for stock media, users can conjure B-roll from simple text prompts with auxiliary features like Vmake’s AI Video Generation. This keeps them anchored inside the same tab where they process their primary footage. Whether your goal is to use a video watermark remover for cleaner assets or to generate new backgrounds, you log in, upload the file, and execute multiple enhancements without ever touching a local directory.

But a sleek interface means nothing if the resulting export is unusable. When filming conditions fall apart on location, algorithmic processing transitions from a neat convenience into a strict necessity.

Treating AI as a salvage operation

The most practical way to view this tier of software is not as a creative suite, but as a salvage operation. The system is fundamentally built to rescue flawed footage so you do not have to endure costly reshoots.

Repairing imperfect environments

Real-world recording conditions are rarely ideal. Vmake deploys noise reduction heavily targeted at imperfect environments, mechanically isolating human vocal frequencies from the chaotic interference of passing street traffic or poor microphone connections.

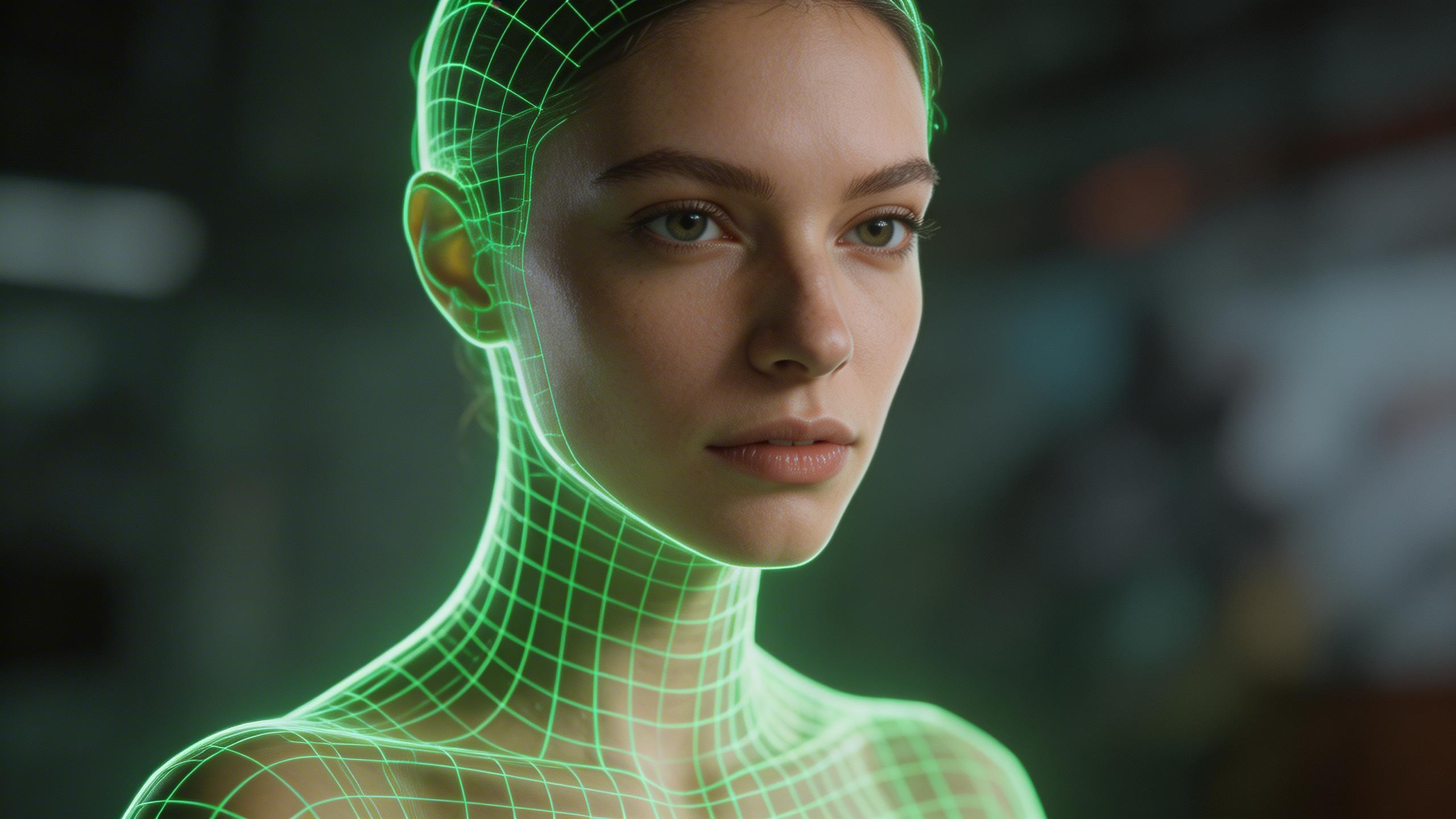

Its visual repair capabilities operate on similar isolation principles. The system executes frame-by-frame analysis to force subject separation, bypassing the historical requirement for physical green screens and allowing immediate background replacement right on the raw footage.

When dealing with appropriated or platform-stamped content, the software utilizes algorithmic reconstruction to erase moving watermarks. Here is the step-by-step processing sequence the engine follows to execute this:

1. The algorithm scans the initial frame to identify the watermark’s block boundary.
2. It tracks that specific coordinate block across every subsequent frame.
3. The engine continuously samples surrounding pixels to seamlessly fill and reconstruct the background data as the overlay shifts.
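To make that three-step sequence concrete, here is a toy sketch in plain Python. It is not Vmake's engine, which is not public; it assumes grayscale frames stored as nested lists, a hypothetical `is_mark` test that flags watermark pixels, and a crude fill that averages the one-pixel ring around the block. Production tools would use far more sophisticated tracking and inpainting.

```python
from statistics import mean

def find_box(frame, is_mark):
    """Steps 1 and 2: bounding box (top, left, bottom, right) of flagged pixels, or None."""
    hits = [(r, c) for r, row in enumerate(frame)
            for c, value in enumerate(row) if is_mark(value)]
    if not hits:
        return None
    rows = [r for r, _ in hits]
    cols = [c for _, c in hits]
    return min(rows), min(cols), max(rows), max(cols)

def fill_from_border(frame, box):
    """Step 3: overwrite the box with the mean of the pixels just outside it."""
    top, left, bottom, right = box
    height, width = len(frame), len(frame[0])
    ring = [frame[r][c]
            for r in range(max(top - 1, 0), min(bottom + 2, height))
            for c in range(max(left - 1, 0), min(right + 2, width))
            if not (top <= r <= bottom and left <= c <= right)]
    fill = round(mean(ring)) if ring else 0
    cleaned = [row[:] for row in frame]  # copy so the input frame is untouched
    for r in range(top, bottom + 1):
        for c in range(left, right + 1):
            cleaned[r][c] = fill
    return cleaned

def remove_watermark(frames, is_mark):
    """Re-locate the block in every frame; reuse the last known box if detection fails."""
    cleaned, box = [], None
    for frame in frames:
        box = find_box(frame, is_mark) or box
        cleaned.append(fill_from_border(frame, box) if box else [row[:] for row in frame])
    return cleaned
```

Even this simplified version shows why the result depends on the background: a flat wall averages cleanly, while a busy, moving backdrop gives the fill step much less to work with.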

The limits of detail generation

A persistent misconception in modern content production is that AI renders set conditions irrelevant. It does not. The unbreachable rule of digital video processing is that source quality dictates the ceiling for detail generation.

The engine works by mathematically extrapolating from existing pixels. If a video is fundamentally destroyed by profound digital artifacting or zero-light conditions, the algorithm cannot simply hallucinate high-resolution data out of the void. Enhancement works brilliantly to salvage a slightly underexposed clip, but treating the software as a license to shoot garbage footage will yield heavily degraded, synthetic-looking outputs.

Once the asset is successfully stabilized on a technical level, the operational focus immediately shifts to how that video performs in the chaotic, algorithmic feed.

Technical edits as automated growth levers

Technical formatting steps like transcription and graphic design are often treated as tedious afterthoughts. Inside a high-volume pipeline, however, they are strictly operational growth levers designed to hack audience retention.

Hacking silent mobile scrolling

According to Vmake’s internal benchmarks, social media videos equipped with captions secure 80% more baseline engagement. The platform automates transcription to output perfectly synced, burned-in captions that consistently capture the attention of audiences engaged in silent mobile scrolling.

There is a fundamental difference in how distribution platforms handle text. Relying on an SRT export means crossing your fingers and trusting the native player to render your subtitle track gracefully—which it rarely does without squashing your text behind UI elements. Delivering a file with burned-in text guarantees your typography, timing, and brand aesthetics survive the upload process completely intact.
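For context, here is a minimal sketch of what a sidecar SRT file actually contains. The cue format (index, `HH:MM:SS,mmm --> HH:MM:SS,mmm`, text, blank line) is the standard SubRip layout; the function names and sample cues are illustrative assumptions, not anything exported by Vmake.

```python
def srt_timestamp(seconds):
    """Format a time offset in seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    total_ms = round(seconds * 1000)
    hours, remainder = divmod(total_ms, 3_600_000)
    minutes, remainder = divmod(remainder, 60_000)
    secs, millis = divmod(remainder, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{millis:03d}"

def to_srt(cues):
    """Build SRT text from (start_seconds, end_seconds, text) tuples."""
    blocks = []
    for index, (start, end, text) in enumerate(cues, start=1):
        blocks.append(f"{index}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}\n")
    return "\n".join(blocks)

# Hypothetical cues for illustration only.
print(to_srt([(0.0, 1.5, "Welcome back."), (1.5, 4.0, "Here is the new setup.")]))
```

Notice what is missing: the file carries timing and text, but no font, size, or screen position. Those decisions fall to whichever player renders the track, which is exactly the control that burned-in captions take back.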

Mood analysis and thumbnail generation

The system makes an interesting semantic leap with its visual packaging tools. It executes mood analysis on the raw video file to drive automated thumbnail generation, attempting to read the contextual “vibe” of the asset and map it to a bespoke title card.

Users gain access to over eighteen dynamic templates meticulously calibrated to the algorithmic preferences of TikTok and Instagram Reels. Assets optimized here are functionally primed for rapid distribution across diverse ecosystems, from vertical YouTube Shorts to targeted ad placements on visually driven networks like Pinterest or community-focused boards like Reddit.

However, relying entirely on remote servers to drive this kind of massive engagement brings its own set of logistical hurdles that live just beneath the clean user interface.

The hidden cloud constraints of web AI

The “limitless” narrative surrounding web-based artificial intelligence immediately collapses when you examine the underlying economics. SaaS video processing runs on wildly expensive remote hardware, and those operational taxes are silently passed down to the user.

Credit consumption and the 4K trap

There is an inverse relationship between aggressive rendering targets and your account balance: the higher the output resolution, the faster your credits drain. The platform operates on a token system, creating a strict micro-economy around credit consumption that heavily penalizes default 4K upscaling.

Pushing standard 1080p footage to an artificial 4K resolution burns through processing credits rapidly. Unless you are exporting for a massive monitor display, this is largely an economic trap. Imposing the rendering tax of 4K output on an audience that will invariably watch a highly compressed stream on a six-inch phone screen is a fundamental misallocation of your cloud resources.
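The arithmetic behind that rendering tax is easy to sketch. The pixel counts below are exact; the credit estimate is purely hypothetical, since it assumes cost scales linearly with output pixels, and Vmake's actual credit pricing is not described here.

```python
FULL_HD = (1920, 1080)
UHD_4K = (3840, 2160)

def pixel_count(resolution):
    """Total pixels per frame for a (width, height) resolution."""
    width, height = resolution
    return width * height

def pixel_ratio(target, source):
    """How many times more pixels the target frame has than the source frame."""
    return pixel_count(target) / pixel_count(source)

def estimated_credits(base_credits, target, source=FULL_HD):
    """ASSUMPTION: credits scale linearly with pixel count (illustrative only)."""
    return base_credits * pixel_ratio(target, source)

print(pixel_ratio(UHD_4K, FULL_HD))  # 4.0
```

A 4K frame carries four times the pixels of a 1080p frame, so under any pricing that tracks compute even loosely, the same clip costs noticeably more to render for detail a phone screen will not show.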

Navigating browser-based limits

Heavy video assets naturally introduce friction in any web application. The platform enforces a hard 200MB upload capacity. This file size ceiling means you are constantly negotiating browser-based limits before editing even begins. High-volume shooters hitting that cap will be forced to route their heavy, raw files through a third-party application or desktop compressor tool prior to upload, which introduces a slight detour into the promised “one-stop” workflow.
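If you do have to pre-compress, a quick calculation tells you what bitrate to target. This is a rough sketch under stated assumptions: it reads "200MB" as 200 × 1,000,000 bytes, reserves a hypothetical 5% headroom for container overhead, and returns a combined video-plus-audio bitrate. None of these figures come from Vmake's documentation.

```python
UPLOAD_CAP_BYTES = 200 * 1000 * 1000  # ASSUMPTION: "200MB" read as decimal megabytes

def needs_compression(file_size_bytes, cap_bytes=UPLOAD_CAP_BYTES):
    """True when the file exceeds the upload cap and must be shrunk first."""
    return file_size_bytes > cap_bytes

def max_bitrate_kbps(duration_seconds, cap_bytes=UPLOAD_CAP_BYTES, headroom_pct=95):
    """Highest total bitrate (kbps) that keeps a clip of this length under the cap.

    headroom_pct is an assumed safety margin for container overhead.
    Integer arithmetic is used throughout to avoid float rounding surprises.
    """
    if duration_seconds <= 0:
        raise ValueError("duration_seconds must be positive")
    usable_bits = cap_bytes * 8 * headroom_pct // 100
    return usable_bits // duration_seconds // 1000

# A ten-minute clip has to stay at roughly 2.5 Mbps total to fit.
print(max_bitrate_kbps(600))
```

The takeaway is that the cap bites hardest on long clips: a one-minute Reel has ten times the bitrate budget of a ten-minute tutorial.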

Furthermore, while the algorithmic transcription is highly effective, it has a distinct 95% accuracy ceiling. Background noise or complex industry jargon will cause the transcription engine to stumble, so human verification remains necessary before you hit export.

GDPR compliance and data retention

There is an inherent anxiety in uploading proprietary organizational footage to consumer-grade cloud servers. To mitigate this “black box” processing risk, Vmake enforces strict data retention duration limits, wiping uploaded media from its infrastructure after exactly seven days.

This deletion protocol supports baseline GDPR compliance and provides a measurable, predictable layer of data governance. Still, standard security hygiene applies: uploading a quick, non-sensitive clip sent by a client via WhatsApp is perfectly fine for social processing, but highly classified internal corporate communications should remain exclusively on local, air-gapped hardware.

With both the strategic advantages and the heavy cloud constraints laid bare, whether this platform fits your specific production workflow comes down to basic math.

Calculating the true ROI for creators

Vmake is engineered specifically for non-technical, high-volume social creators. It is practically useless for cinematic perfectionists seeking to tweak color grades on a microscopic level.

Its true return on investment has very little to do with raw feature count. The ultimate value of the platform is dictated by the actual credit cost per feature when compared directly against the time you currently hemorrhage exporting files between legacy desktop apps.

Audit your current application subscriptions. Measure the sheer latency of moving a single file through your favorite audio scrubber, caption generator, and editing suite. If eliminating that context-switching fatigue saves you multiple production hours a week, consolidating your operations into a single browser tab is a highly logical pivot.
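That audit can be reduced to a few lines of arithmetic. The sketch below is a back-of-the-envelope model, not a pricing calculator: every field is a number you would supply from your own workflow, and the names (`WeeklyWorkflow`, `cost_per_credit`, and so on) are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class WeeklyWorkflow:
    hours_saved: float                  # time no longer spent moving files between apps
    hourly_rate: float                  # what an hour of your time is worth
    credits_used: float                 # platform credits consumed per week
    cost_per_credit: float              # your effective price per credit
    subscriptions_dropped: float = 0.0  # weekly cost of tools you can cancel

def net_weekly_value(w):
    """Value of time saved plus cancelled tools, minus what the credits cost."""
    time_value = w.hours_saved * w.hourly_rate
    credit_cost = w.credits_used * w.cost_per_credit
    return time_value + w.subscriptions_dropped - credit_cost

def break_even_hours(w):
    """Hours per week that must be saved before the switch pays for itself."""
    net_cost = w.credits_used * w.cost_per_credit - w.subscriptions_dropped
    return max(net_cost, 0.0) / w.hourly_rate

# Placeholder numbers for illustration only.
example = WeeklyWorkflow(hours_saved=3, hourly_rate=40, credits_used=40,
                         cost_per_credit=0.5, subscriptions_dropped=5)
print(net_weekly_value(example), break_even_hours(example))
```

If the break-even figure comes out to minutes rather than hours, the decision is easy. If it creeps toward the time you actually save, the credit meter is eating the benefit.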

Frequently Asked Questions

What is the actual difference between Vmake and a traditional editor like Premiere Pro?

Vmake trades granular, non-linear timeline control for automated algorithmic speed. Rather than constantly tweaking manual sliders on a local machine, you use their remote servers to execute single-purpose actions like audio repair or background removal. It is built strictly for high-volume social media output, not cinematic perfectionism.

How does Vmake’s watermark removal actually process moving video?

The AI engine identifies the watermark’s specific coordinate block on the initial frame and systematically tracks it throughout the clip. As the overlay shifts across the screen, the algorithm continuously samples the surrounding pixels to paint over the watermark and flawlessly reconstruct the missing background data.

Can I use the AI to completely restore dark or highly corrupted footage?

No, because the unbreachable rule of digital editing is that source quality dictates the ceiling for AI generation. The software works brilliantly to salvage slightly underexposed clips, but it cannot hallucinate high-resolution data out of absolute darkness without making your export look synthetic and heavily distorted.

Why does the platform penalize 4K video upscaling?

Web-based artificial intelligence relies on wildly expensive remote cloud servers, and Vmake passes those compute costs to users via a credit-based token economy. Forcing 1080p footage to an artificial 4K resolution burns through those credits rapidly, which is largely a financial trap if your viewers are watching compressed videos on small mobile screens.

How much raw video can I actually upload at once?

The browser interface enforces a strict, hard cap of 200MB per upload. High-volume shooters routinely hitting this media ceiling will unfortunately be forced to bounce their heavy videos through a desktop compressor first, adding a frustrating detour to the promised workflow.

Why does Vmake hard-code captions into the video instead of exporting traditional SRT files?

Exporting a standalone SRT file means blindly trusting a social platform’s native video player to render subtitles properly, which usually results in text getting squashed behind random UI elements. Burned-in captions guarantee your exact timing, typography, and brand aesthetics survive the upload process completely intact to capture silent scrollers.

Is it completely safe to upload unreleased client footage to these remote AI servers?

The platform provides baseline GDPR compliance by systematically wiping all uploaded media from its servers after exactly seven days. While this retention policy is perfectly adequate for standard marketing clips, highly sensitive corporate communications should remain isolated on local, air-gapped editing hardware.